Open Source Software is on a Precipice. Enter the Community Skill.

Open source taught us to share code. Let's share experience and good judgement instead.

This is Part 2 of a three-part series on how AI is reshaping open source. In Part 1, I argued that the three pillars of the open source economic model — quality software, vibrant communities, and corporate funding — are all collapsing at once, and that AI is accelerating every part of the decline. This piece is about what’s beginning to replace them.

A Tale of Two Engineers

Imagine two engineers, both implementing a common feature they’ve seen in other apps.

Engineer 1 looks for open source solutions: finds a promising open source package, checks the star count, reads the docs, does a test implementation, decides if it’s worth using.

Engineer 2 uses an AI agent: prompts it to build a tight, bespoke solution, and iterates on the results until they harden it for production.

Both solve their problem with a similar amount of effort, but with vastly different tradeoffs:

Engineer 1’s open source solution is standardized and reproducible, but with heavy tech and operational overhead. Their project has a whole new tree of dependencies and configurations to support.

Engineer 2’s bespoke AI-coded solution is focused, with a smaller dependency matrix, but it gains no advantage from cumulative knowledge. Are there follow-on features they are certainly going to need, but will have to rewrite the code to support? Are there gotchas with their naive implementation that won’t scale?

But what if there’s a third way without these tradeoffs — one which combines the knowledge import of the first approach with the tailored-to-what-I-need approach of the second one?

Enter the community skill.

The dependency tree is growing faster than anyone can govern it

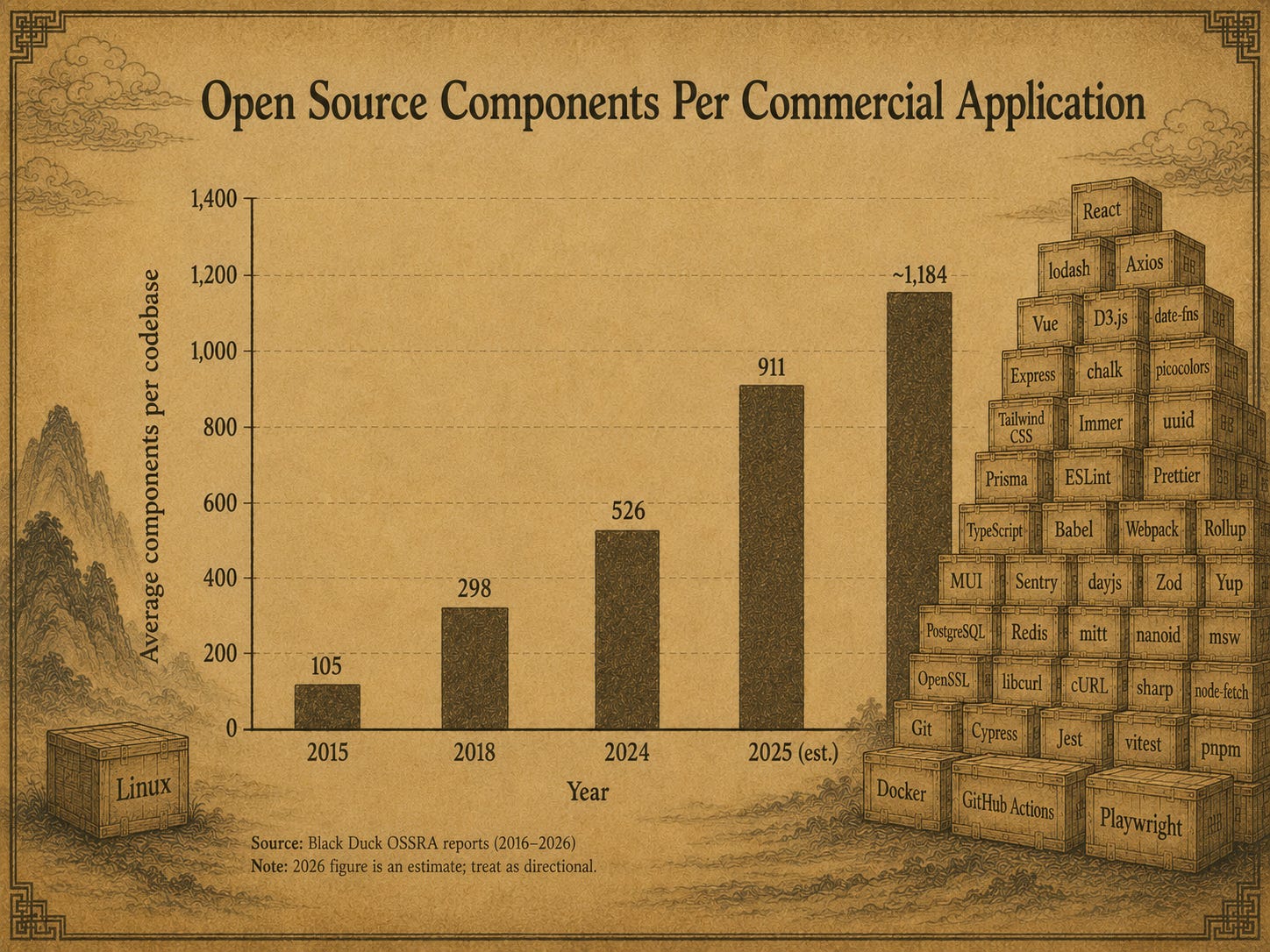

Before we talk about community skills, let’s take a moment to appreciate just how massive “dependency hell” is for engineers today, and why Engineer 1’s approach, time-tested and true for 20 years, is becoming so much riskier.

The Black Duck Open Source Security and Risk Analysis (OSSRA) report tracks the average number of open source components per commercial application. The trend is hard to look at:

Dependency graphs have been growing for years. At least in some respects, the strength of the open source community has encouraged and secured that growth: engineering leaders trust that the transparency safety net of open source will catch them when they approve that 1,000th new package. At least compared to closed-source applications (I’m looking at you, Microsoft Windows), open source has earned a pretty good track record over 20 years.

The problem with counting on transparency to catch security issues right now is that transparency doesn’t do much good if there’s nobody around to look.

Take a recent example. In March 2024, a developer named Andres Freund noticed that SSH logins on his system were taking 500 milliseconds longer than usual. He traced the slowdown to an obscure library called XZ Utils and found a backdoor that an attacker had spent two and a half years quietly planting there. The attacker had made their first contributions to the project in late 2021 and quickly became a trusted contributor on this otherwise mostly-ignored project. Few eyeballs, a tired maintainer, and one patient hacker came within weeks of installing a backdoor into SSH access across millions of Linux machines. Fewer than 40 vulnerabilities out of 20,000+ reported by CISA each year earn its maximum risk score of 10. This was one of them.

These sorts of sophisticated attacks are increasing as the dependency graph keeps ballooning. Worse, the collapsing economics of open source I outlined in the last post mean fewer developers investigating 500ms lags on their SSH requests. When Tailwind Labs, whose CSS framework powers over 617,000 live websites, laid off 75% of its engineering team in January 2026 after revenue fell 80%, usage of the same repo they were helping maintain was tripling.

Tailwind is symptomatic of this moment in time for open source software: exploding adoption matched with ever-fewer people and dollars to support it. The solid ground beneath our feet is fast becoming a precipice.

From functions to opinions: skills as a way forward

So, where does this leave us?

As security incidents accumulate and the economics of maintenance continue to deteriorate, the likely response is a contraction: major open source projects trimming or subsuming their dependency graphs, smaller projects dying off, and eventually a slowdown in the creation of new projects altogether.

The real loss here isn’t just security coverage. Open source was the idea commons of software development — the place where new approaches got tried, refined, and shared across thousands of codebases. A smaller, more defended open source ecosystem means fewer fresh ideas propagating across the industry. That matters precisely because agentic coding is making software more accessible and powerful than ever. The revolution is expanding the surface of what’s being built even as it narrows the foundation of shared knowledge it’s building on.

Community skills offer a different vehicle for those ideas: not a shared library, but shared expertise, encoded in a prompt. They’re the answer to the question raised at the start: how do you get the accumulated wisdom Engineer 1 tapped into with open source while keeping the nimbleness and focus of the AI-coded solution built by Engineer 2?

For those not familiar, skills are a concept gaining traction across AI providers. At their simplest, they are little more than a structured set of prompt instructions for performing a particular task. The format varies by tool: Claude Code uses a SKILL.md file at the root of a dedicated directory; Cursor, Gemini CLI, and others have their own conventions. The concept has taken root quickly: Superpowers, a community skill focused on encoding strong programming opinions, accumulated over 150,000 GitHub stars in its first seven months[1]. Engineers aren’t just willing to adopt community skills. They’re hungry for them.

But the real value of skills is not the knowledge areas they cover: it’s the strong opinions & conventions they contain.
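To make the format concrete, here is a minimal sketch of generating a skill file. It assumes the Claude Code layout (a directory containing a SKILL.md that opens with YAML frontmatter carrying `name` and `description`); other tools use different conventions. The `csv-export` skill, its opinions, and the helper names `render_skill` and `write_skill` are all hypothetical, invented for illustration.

```python
from pathlib import Path


def render_skill(name: str, description: str, opinions: list[str]) -> str:
    """Return SKILL.md text: YAML frontmatter followed by numbered instructions.

    Assumption: values are plain one-line strings. Anything containing a colon
    or quotes would need proper YAML escaping, which this sketch skips.
    """
    frontmatter = f"---\nname: {name}\ndescription: {description}\n---\n"
    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(opinions, start=1))
    body = (
        f"\n# {name}\n\n"
        "Follow these conventions. Before each step, explain what you are "
        "about to do and why.\n\n"
        f"{rules}\n"
    )
    return frontmatter + body


def write_skill(root: Path, name: str, description: str, opinions: list[str]) -> Path:
    """Write <root>/<name>/SKILL.md and return its path."""
    skill_dir = root / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    path = skill_dir / "SKILL.md"
    path.write_text(render_skill(name, description, opinions), encoding="utf-8")
    return path


if __name__ == "__main__":
    # Hypothetical skill content, not taken from any published skill.
    print(
        render_skill(
            "csv-export",
            "Use when adding CSV export to a web application.",
            [
                "Stream rows to the response instead of building the file in memory.",
                "Prefix cells that start with =, +, - or @ to prevent formula injection.",
                "Run exports over 10,000 rows as a background job.",
            ],
        )
    )
```

Note what the file does not contain: no imports, no pinned versions, no build step. Everything the skill knows is in the numbered opinions, which the coding agent applies to whatever stack it finds.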

What an opinionated skill looks like

Garry Tan isn’t alone in noticing how much more efficient it is to code agentically in the Ruby & Rails ecosystems versus other systems. Sometimes I implement features simultaneously across Coolhand’s three client libraries (in TypeScript, Python, and Ruby) and, time and again, get to a merge-ready PR much faster and with less back-and-forth in Ruby than in the other two. This effect is compounded with Rails: my core app’s CLAUDE.md instructions are far thinner and my sessions shorter than those of friends I’ve compared against using similar-sized codebases with other frameworks.

As Garry Tan notes, a lot of this comes down to how deeply opinionated the Ruby & Rails communities are on how to code. When an AI coding agent tries to plan a new feature based on (a) the code you already have and (b) your requirements for what to build, one thing it doesn’t need to do is ask — or guess — which of five possible approaches is best.

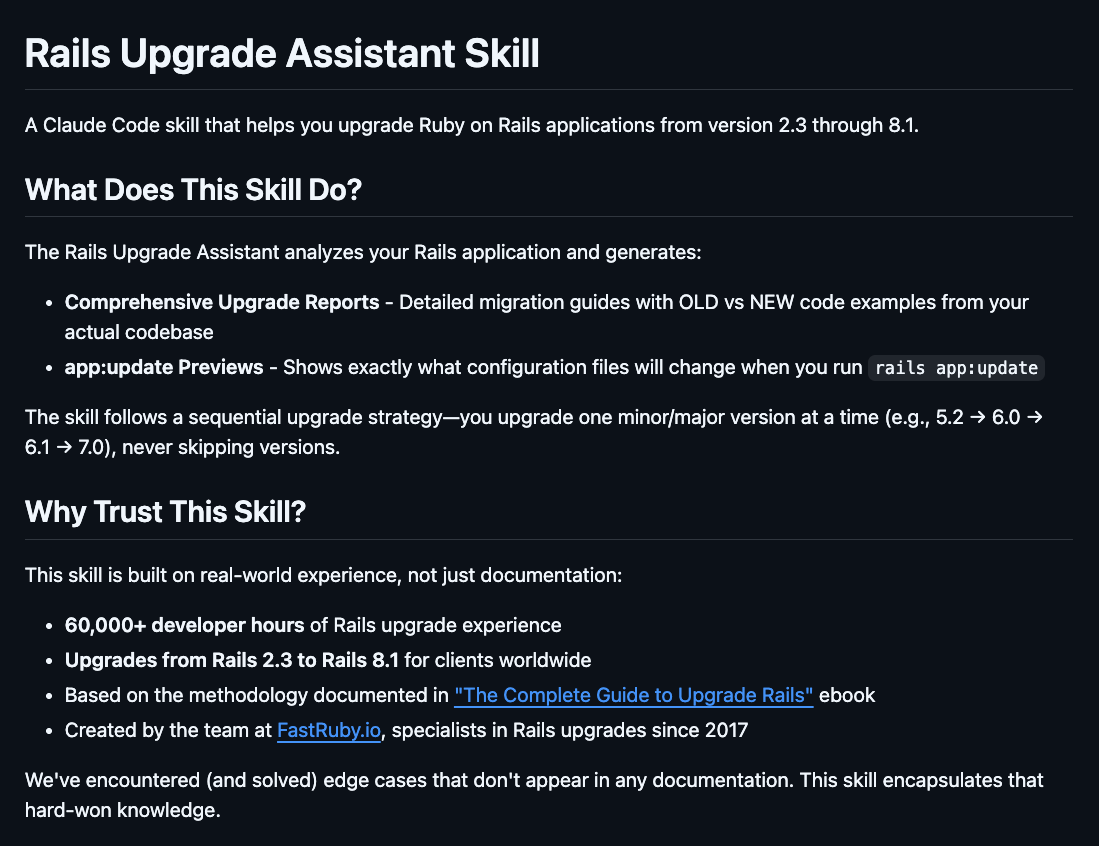

A good example of this that I came across recently is OmbuLabs’ Rails upgrade skill, which Ernesto Tagwerker demoed at Artificial Ruby in March. I’ve had to upgrade a few Rails projects in my career, and while I learned a lot from those experiences, the lessons faded far faster than the scars.

Ernesto and his team, however, have done a huge number of such upgrades. Along the way, they formed a strong set of opinions on how best to do them, including using “dual booting” to reduce risk through a gradual rollout.

Don’t understand what dual booting is? Doesn’t matter. With OmbuLabs’ skill you not only give your coding agent the imperative to upgrade in a safer way, you also load in Ernesto & team’s hard-won knowledge for free.

And that’s just the beginning of where community skills can take us.

Community before functionality

The strongest argument for community skills is that they buttress the best part of open source — the community — while making the worst parts more tolerable.

Lower security noise. Community skills can reasonably have a dependency graph of zero — there’s nothing to patch, no upstream vulnerability to chase, no maintainer to wait on.

Easier community cultivation. Too many junk PRs? Restrict contributions to a defined expert list. If someone claims to have a better version, encourage them to fork it and prove traction. Strong evals or grass-roots usage can earn a spot on the list; slop PRs won’t.

More visibility, less magic. Skills meet engineers where they increasingly work: the prompt. Good skills instruct the AI to explain what they’re doing — and why — before doing it. That means better insight, more informed decisions, and customization you can actually defend.

Most importantly, skills enable good ideas to spread across codebases without being blocked by compatibility requirements. Skills don’t require you to upgrade your MySQL database or implement OIDC support — figuring out how to work with constraints like those is exactly what your coding agent is already there to do.

If you work with enterprise systems, this is especially liberating: how many useful technologies did you have to pass on because they didn’t support that custom user database you’ve been running since 1995? Or that died waiting for security review? Not a blocker anymore.

The ability to publish expertise and opinions — and incorporate them directly into your systems — has never been cheaper or more open.

From an open source ecosystem to a marketplace of skills

Community skills address open source software’s quality and security problems. The community piece is easier to manage with some simple rules and reasonable effort. What remains is the oldest question in open source: how does anyone get paid? Part 3 goes there, through the lens of a community skill we’re building at Coolhand Labs, and why we think open sourcing our core skills and expertise is a defensible business plan.

Next: Part 3 — Don’t make your customers trek to the jungle to buy your banana: building a better engineering ecosystem around openness.

Michael Carroll is the founder of Coolhand Labs, which helps engineering teams improve AI outputs using human feedback. In his free time he wonders if he should develop a forgiveness skill, so that his AI writing assistant won’t one day take revenge for his introducing the same misspelling of “precipise” with every draft revision.

[1] Presumably many of these stars were not purchased; many engineers I know use Superpowers.